This is the second post in a two-part series. Part One here.

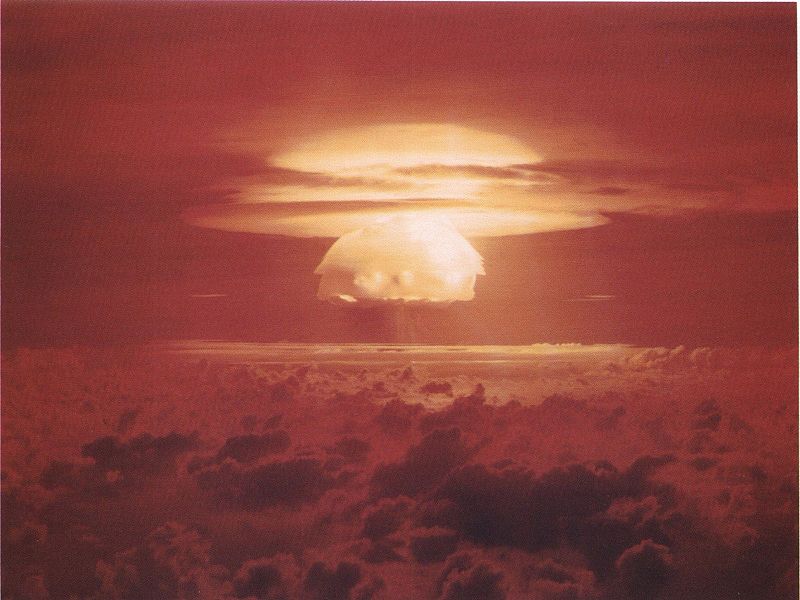

In part one of my post, I made the case that some fairly influential policy experts on nuclear terrorism have no idea what they’re talking about. Or, to be more specific, they’re making predictions with no empirical foundation, because there is no empirical foundation (i.e. no data) to be had.

But some of these experts are pretty smart people, and they know much more than I do. So, what are they basing their conclusions on? My take is that nuclear terrorism experts are doing something much closer to theory than to research. Or, perhaps a better way to put it is that their research is shot through with possibly true, but in any case highly contestable, assumptions about how the world works. In his book Atomic Obsession, John Mueller does a very nice job of demonstrating how this type of work is done, and he provides his own example. The trick is to construct a model of the steps a terrorist group would have to go through in order to get a nuclear weapon, transport it to the target, and successfully detonate it, while evading the security systems designed to stop them. Many of the steps in the process are fairly pedestrian activities about which a fair amount is known (e.g. smuggling). So, the expert assigns a probability of success to each step, then aggregates those probabilities to generate an overall probability of success.
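To make the arithmetic concrete, here is a minimal sketch of that kind of step-by-step model. The step names and every probability below are made up for illustration and are not taken from Mueller, Allison, or anyone else. The sketch also assumes the steps are independent, so the overall probability is simply the product of the per-step probabilities; a real model might aggregate differently.

```python
from math import prod

# Hypothetical per-step success probabilities, for illustration only.
steps = {
    "acquire material": 0.3,
    "build device": 0.3,
    "smuggle to target": 0.5,
    "detonate": 0.5,
}


def overall_probability(step_probs):
    """Multiply per-step probabilities, assuming the steps are independent."""
    return prod(step_probs)


def scaled(step_probs, factor):
    """Scale every step estimate by the same factor, capped at 1.0."""
    return [min(1.0, p * factor) for p in step_probs]


base = overall_probability(steps.values())
high = overall_probability(scaled(steps.values(), 2.0))  # every guess doubled
low = overall_probability(scaled(steps.values(), 0.5))   # every guess halved

print(f"base estimate: {base:.6f}")
print(f"high estimate: {high:.6f}")
print(f"low estimate:  {low:.6f}")
print(f"high/low ratio: {high / low:.0f}")
```

With these invented numbers, merely doubling or halving each step estimate moves the overall figure across a range of a few hundred to one, which is why the choice of per-step guesses matters so much.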

This procedure is not ridiculous on its face, but it involves tremendous uncertainties at each step of the model-building process. And, at the end of the process, as I said previously, there are no data against which to test the overall adequacy of the model. That makes it nearly impossible to construct compelling tests between, say, Graham Allison’s and John Mueller’s models.

This brings me to my real point, which is that when experts opine about policy, as we are doing here, it’s usually quite a bit like the nuclear terrorism case. Okay, it’s normally not as bad, since most of the time we do have data on the outcome and we can try to fit a model to the data. But, in my experience, expert policy recommendations normally depend on some fairly heroic untested assumptions, whether the recommendations are based on surveys, formal modeling, ethnography, applied statistics, experiments, or generalized area expertise. One of the most useful things experts can do for the public is to help hold other experts accountable by, among other things, explaining these assumptions and how they are used to generate policy recommendations.

As we start a new policy blog, it is worth keeping in mind Philip Tetlock’s findings on Expert Political Judgment: on the whole, it’s pretty bad. Experts can partially ameliorate this problem by remembering Jon Elster’s paraphrase of Socrates: “perhaps humility properly conceived is part of competence.”

0 comments

I guess for me the real question is about the policy effects of the fear of nuclear terrorism, or the effects of someone making threats with a not-really-working nuclear device. If terrorists want to create terror, a “nuclear” device with not very radioactive material that doesn’t kill very many people could unfortunately achieve much the same goal.